Managing Azure Functions logging to Application Insights

The Azure Functions team has made it incredibly easy to emit telemetry to Application Insights. It really is as easy as updating the Function App’s settings, as described on the App Insights wiki page of the Azure Functions project on GitHub. However, if you are on the Basic pricing plan for Application Insights, the 32.3 MB daily allowance gets used up pretty quickly.

The rest of this post explains the telemetry that Azure Functions sends to Application Insights, and how to configure the function app’s host.json to filter that telemetry and reduce the volume sent.

The naïve approach

Aka, I haven’t got a clue: I removed every log.info(“informational message”) call from my function code. While this certainly helped me understand that the Azure Functions runtime itself emits a lot of telemetry, it made no significant impact on the overall volume sent. Furthermore, as will become clear, it is not the best approach.

What to do?

It is pretty easy to find information about the host.json settings that influence the telemetry sent by Azure Functions. However, that information seems to assume you already understand logger configuration and the telemetry being sent in the first place. So, whatever I found, the real problem was relating that content to the telemetry itself.

In hindsight, the reference docs section of the App Insights wiki page was useful, as was the logger section of the Azure Functions host.json page. Eventually, after the Stack Overflow question “Azure Function verbose trace logging to Application Insights” led me to the filtering section of the Azure WebJobs Application Insights integration docs, the penny dropped: go and find out the telemetry’s category.
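Filtering works per category: each category gets a minimum log level, falling back to a default. The Python below is a minimal model of that idea, not the Functions runtime’s code. It assumes plain, case-sensitive string-prefix matching where the longest matching prefix wins, and the helper names (min_level_for, is_sent) are hypothetical.

```python
from enum import IntEnum
from typing import Mapping


class LogLevel(IntEnum):
    """Log levels, ordered from least to most severe."""
    TRACE = 0
    DEBUG = 1
    INFORMATION = 2
    WARNING = 3
    ERROR = 4
    CRITICAL = 5
    NONE = 6  # as a minimum level, filters out everything in a category


def min_level_for(category: str, default: LogLevel,
                  overrides: Mapping[str, LogLevel]) -> LogLevel:
    """Return the minimum level for a category, using the longest matching prefix."""
    best_prefix = None
    level = default
    for prefix, prefix_level in overrides.items():
        # Assumption: plain (case-sensitive) string-prefix matching.
        if category.startswith(prefix) and (
                best_prefix is None or len(prefix) > len(best_prefix)):
            best_prefix, level = prefix, prefix_level
    return level


def is_sent(category: str, level: LogLevel, default: LogLevel,
            overrides: Mapping[str, LogLevel]) -> bool:
    """True if a message at `level` in `category` passes the filter."""
    return level >= min_level_for(category, default, overrides)


# Information by default, Error-only for the two noisy host categories.
DEFAULT = LogLevel.INFORMATION
OVERRIDES = {"Host.Executor": LogLevel.ERROR, "Host.Results": LogLevel.ERROR}

print(is_sent("Host.Executor", LogLevel.INFORMATION, DEFAULT, OVERRIDES))  # False
print(is_sent("Host.Startup", LogLevel.INFORMATION, DEFAULT, OVERRIDES))   # True
```

The point of the model is that the message text is irrelevant to the filter. Only the category and level matter, which is why knowing the category is the key.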

Revisiting Application Insights

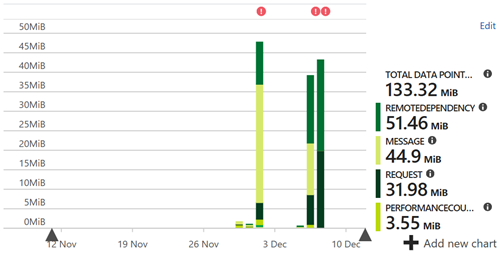

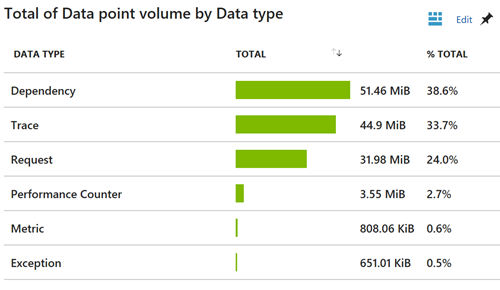

The Data Volume Management blade in Application Insights presents a chart of daily data volume for the past 31 days. Clicking this chart opens the Data point volume blade, which presents a further chart breaking the volume down by data type. Both charts are shown above.

This shows the overall distribution of the telemetry sent very clearly. In addition to the standard telemetry, I have implemented dependency tracking, which is the most important telemetry for my purposes. Trace telemetry accounts for a large share of the volume, and it would be great to reduce it. As the top chart shows, it can be done, but I am getting ahead of myself.

Application Insights Analytics

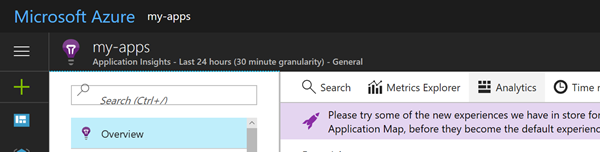

The Application Insights GUI is very useful for drilling into telemetry to find specific items, but less useful when you need to explore properties across the entire set. Application Insights Analytics is a better tool for this kind of work. If you are not familiar with it, it provides a query-like interface over Application Insights telemetry, allowing you to drill down quickly to isolate interesting items or to produce custom charts that can be pinned to a dashboard. It can be accessed in a number of ways, the simplest being to click Analytics on the Application Insights Overview blade, as shown below.

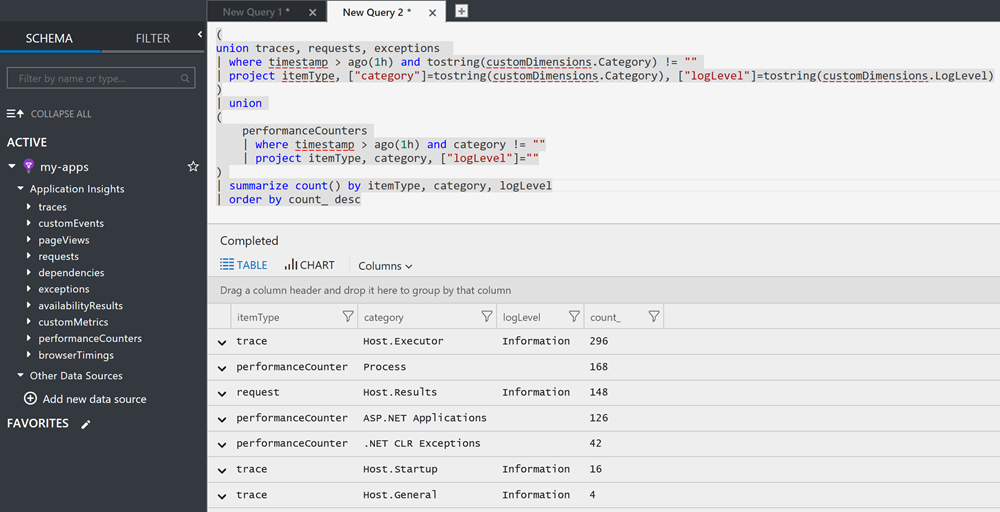

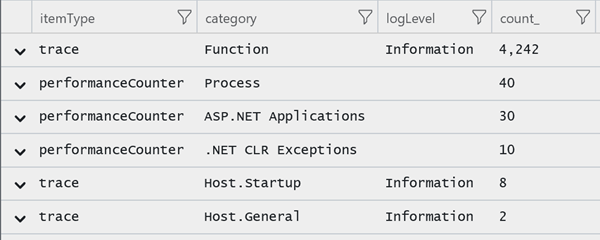

A telemetry summary (and the query behind it), captured after a short function run, is shown above. The summary groups telemetry by type, such as requests, traces or performanceCounters, then by category and logLevel. This is the standard telemetry output by the function host. A telemetry type may map to a single category or to several: requests map to the category Host.Results, while traces map to a number of categories, including Host.Executor, Host.Results, Host.Startup and Host.General, with Host.Executor emitting the majority of messages.
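The grouping in that summary can be sketched in plain Python to show how one category comes to dominate the volume. The rows below are made up for illustration, not real results. The field names itemType, category and logLevel are assumptions standing in for whatever columns your export returns; in Analytics, the category and log level recorded by the Functions host are assumed to live under customDimensions.

```python
from collections import Counter

# Made-up sample rows for illustration only (not real results).
rows = [
    {"itemType": "request", "category": "Host.Results", "logLevel": "Information"},
    {"itemType": "trace", "category": "Host.Executor", "logLevel": "Information"},
    {"itemType": "trace", "category": "Host.Executor", "logLevel": "Information"},
    {"itemType": "trace", "category": "Host.Executor", "logLevel": "Information"},
    {"itemType": "trace", "category": "Host.Startup", "logLevel": "Information"},
]


def summarise(telemetry):
    """Count items per (itemType, category, logLevel), most frequent first."""
    counts = Counter((t["itemType"], t["category"], t["logLevel"]) for t in telemetry)
    return counts.most_common()


def share_by_category(telemetry):
    """Fraction of all items contributed by each category."""
    counts = Counter(t["category"] for t in telemetry)
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()}


for key, n in summarise(rows):
    print(key, n)
print(share_by_category(rows))
```

Grouping by category rather than by type is the useful view here, because the host.json filter is keyed on category.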

For my purposes, I am not interested in the Host.Executor category, which mainly consists of function start and end information traces. Nor am I interested in the Host.Results category, mainly because I have implemented dependency telemetry, so it would be duplication.

Configuring host.json

Now that I know which telemetry categories I want to filter, all that is left is to update the function app’s host.json to include the following snippet, which configures the logger with a category filter. The filter’s default level is Information, so messages at Information or higher are logged across all categories. However, the Host.Executor and Host.Results categories are overridden to log only Error or above.
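For reference, the filter just described can be written out as JSON. The Python below builds a logger section for host.json under my understanding of the Functions v1 schema (logger.categoryFilter with defaultLevel and categoryLevels) and prints it. The helper name build_logger_section is hypothetical. In Functions v2 and later, my understanding is that the equivalent settings live under logging.logLevel instead, so check the host.json reference for your runtime version.

```python
import json

# Assumes the Functions v1 host.json schema (logger.categoryFilter).
# Level names follow the .NET log levels: Trace, Debug, Information,
# Warning, Error, Critical, None.


def build_logger_section(default_level="Information", category_levels=None):
    """Return the logger section of host.json with a category filter."""
    if category_levels is None:
        # Keep only errors from the two noisy host categories.
        category_levels = {"Host.Executor": "Error", "Host.Results": "Error"}
    return {
        "logger": {
            "categoryFilter": {
                "defaultLevel": default_level,
                "categoryLevels": dict(category_levels),
            }
        }
    }


host_json = build_logger_section()
print(json.dumps(host_json, indent=2))
```

Generating the section keeps the category names in one place, but pasting the printed JSON into host.json by hand works just as well.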

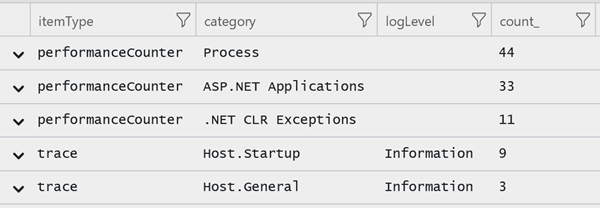

Rerunning the function for the same duration and summarising the telemetry again shows that requests and the traces relating to Host.Executor are absent. Traces generated by the function itself now appear, because I reverted the function code to restore its logging, and their volume is significant.

In summary

All that is left now is to filter out the function’s information logging, the right way!
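The right way means adding another category override rather than deleting log calls. The sketch below makes two assumptions: the Functions v1 schema, and that logs written by function code carry the category Function. Confirm the actual category in Analytics first. The hypothetical helper set_category_level performs a read-modify-write on host.json, and Warning is an illustrative level, not a recommendation from the runtime docs.

```python
import json
import tempfile
from pathlib import Path


def set_category_level(host_json_path, category, level):
    """Set logger.categoryFilter.categoryLevels[category], keeping other settings."""
    path = Path(host_json_path)
    config = json.loads(path.read_text()) if path.exists() else {}
    # Create any missing sections rather than failing on a minimal host.json.
    levels = (config.setdefault("logger", {})
                    .setdefault("categoryFilter", {})
                    .setdefault("categoryLevels", {}))
    levels[category] = level
    path.write_text(json.dumps(config, indent=2) + "\n")
    return config


# Demo against a throwaway file rather than a real function app.
with tempfile.TemporaryDirectory() as tmp:
    host = Path(tmp) / "host.json"
    host.write_text(json.dumps({
        "logger": {
            "categoryFilter": {
                "defaultLevel": "Information",
                "categoryLevels": {"Host.Executor": "Error", "Host.Results": "Error"},
            }
        }
    }))
    # Hypothetical choice: keep only warnings and above from function code.
    updated = set_category_level(host, "Function", "Warning")
    print(host.read_text())
```

Because the function’s log calls stay in the code, lowering the override back to Information restores full detail for troubleshooting without a redeploy of the function code.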

Et voilà.