Why is Sqlpackage Using All The Build Server Memory?

Sqlpackage can be particularly resource-intensive when scripting a database that has a considerable amount of objects. In this post I'm going to discuss the options available when scripting out a database deployment file from a dacpac when using sqlpackage.exe. I'm also going to investigate how resource intensive they are and what we can do to limit the hardware resources used and how much of an impact this has on our waiting times, with some interesting results on where we were taking the performance hit.

Recently I've noticed that when we have more than one build running at the same time on the same build server the builds were never finishing. They were just hanging on scripting out our databases. In our builds, we use a custom target to script out our database deployment file by comparing the solution to a dacpac which is in our source control. The sqlpackage command is below:

"C:\Program Files (x86)\Microsoft SQL Server\110\DAC\bin\sqlpackage.exe" /a:Script /sf:"C:\Database\bin\x64\debug\db.dacpac" /tf:"C:\Database\Baselines\db_BaseLine.dacpac" /pr:"C:\Database\db\Build.publish.xml" /op:"C:\Builds\bin\x64\debug\deploy.db.sql"

There's plenty of info on Sqlpackage on the MSDN website, but broadly I am using the following parameters:

- /action: we want to extract the dacpac to a location. We are using the script option. The other options available for the action parameter are: Extract | DeployReport | DriftReport | Publish | Script | Export | Import

- /sf Specifies a source file to be used as the source of action instead of database.

- /tf: short form for the parameter /targetfile. This is the location of where we want to create the dacpac to. If a dacpac of the same name already exists then it will overwrite that dacpac.

- /pr: Specifies the file path to a DAC Publish Profile. The profile defines a collection of properties and variables to use when generating outputs.

- /op: Specifies the file path where the XML output files are generated.

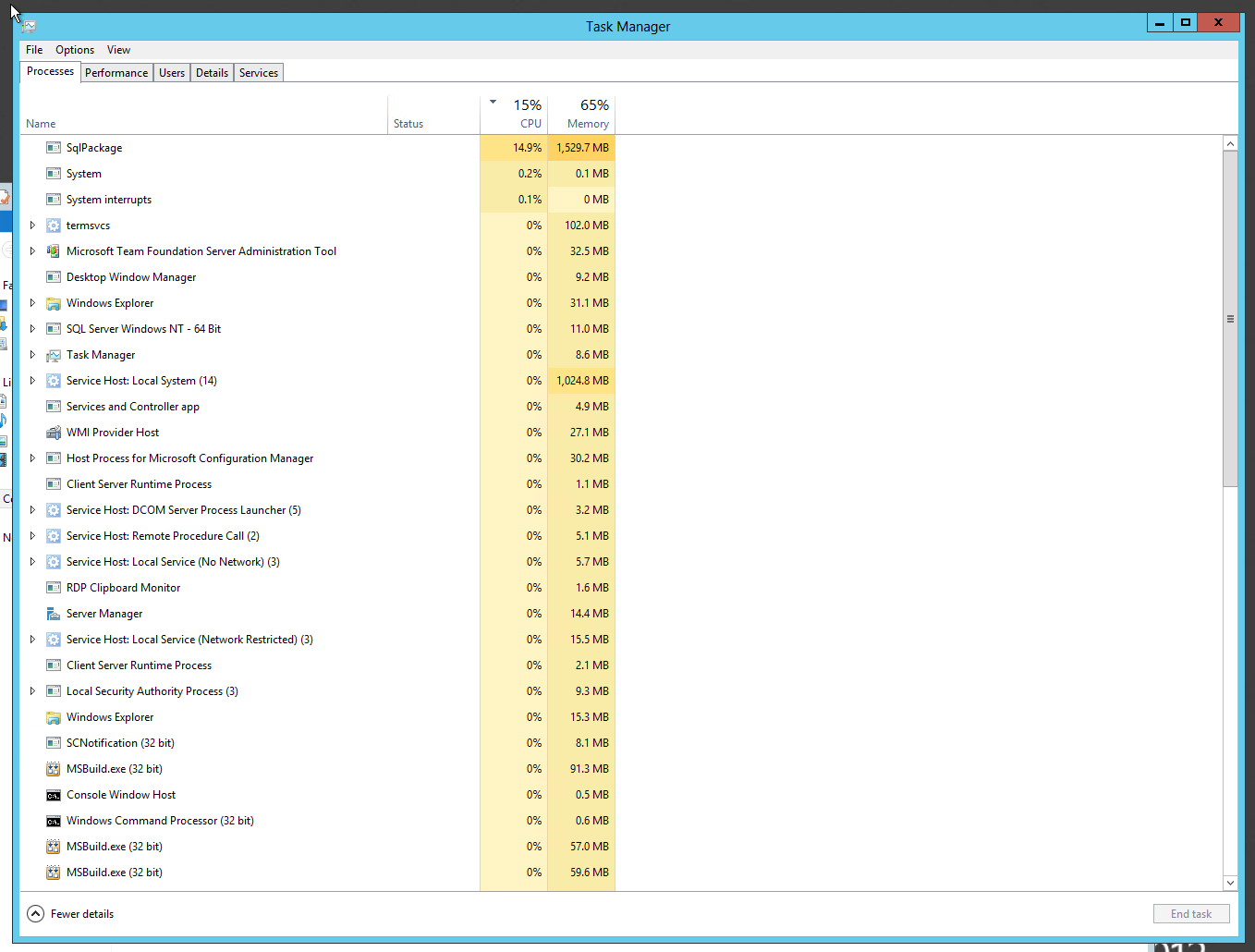

So logging onto the build server I noticed that the memory usage for sqlpackage was at 95% of the total memory on the server! This equated to well over 5GB, and I'm sure if the server had more memory the sqlpackage cmd would take more.

I stopped the builds and did some more checking on the MSDN page. Turns out that there is an option to define where the elements are stored when building the database model, and that for performance reasons the default is "Memory".

- /p:Storage ={ File | Memory }

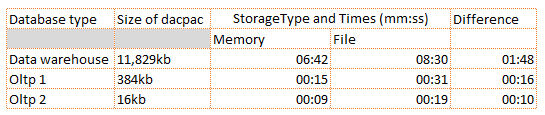

However, one of our databases is a large data warehouse with many partitions. As we build from dacpacs, we can infer the size of the database model from the size of the dacpac:

- data warehouse dacpac: 11,829kb

- oltp1 database dacpac: 384kb

- oltp2 database dcapac: 16kb

Clearly all these partitions are in the meta data of the dacpac. That dacpac is huge! I had a reckoning that we were experiencing memory issues when scripting out this database. However it's not the dacpac of the baseline itself that causes the issue but the model in the database solution itself. I appended /p:Storage=File to the end of my sqlpackage command and ran the build. This time when the script part ran it took 1.5GB of Memory, some 30% less than the Memory option.

So I added this to our builds and since then we've been able to run more than one build and they run successfully, albeit slower than before. But what about performance?

Taking One For The Team: Performance

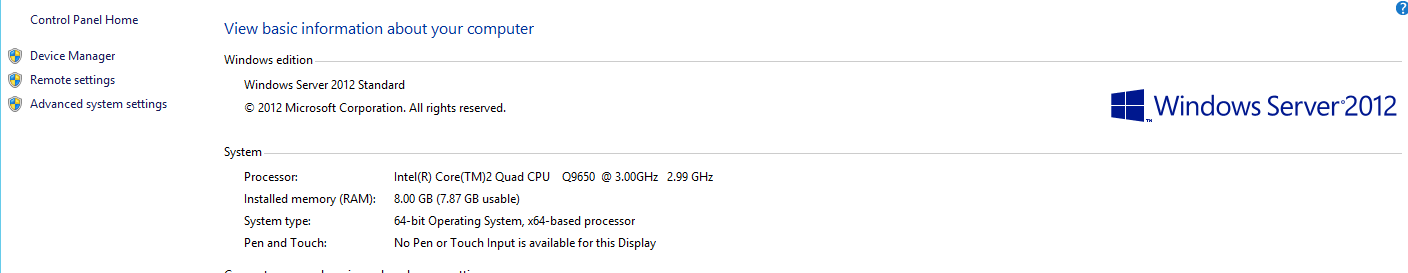

The build server is hardly decent; in fact its a desktop that has been configured to be a build server ( Dell Optiplex 960)! The specs are

- 8GB of RAM

- SATA2 connected 7200RPM 250GB 3.5 HDD

- Intel® Core™2 Quad Processor Q9650, released some 5 years ago!

Clearly this is not an ideal build server in 2013. Moving the database out of memory onto a slow disk like this is definitely going to affect performance considerably.

Surely?

Well, unsurprisingly, it does make the build run slower. But not as much as I thought. I wanted to delve deeply into where we were losing the performance. Here is the overall speed when running the script InMemory:

and this is the overall speed when we use the File option:

About 40 seconds here, not too bad. But let's find the actual target in the build and check the speeds reported in the build log, as this is too high a level to gauge the actual performance difference. Apart form scripting, we are getting the source form TFS, we're compiling solutions, copying files etc, so lets dig deeper....

In memory, scripting the database took 8 minutes 38 seconds for three databases, including the one very large data warehouse.

502923 ms GenerateScript 1 calls

When using the file option, scripting the database took 9 minutes 35 seconds for three databases, including the one very large data warehouse.

561367 ms GenerateScript 1 calls

Let's not stop here though, let's break it down even further. We batch the commands in the build to speed up the build, so if I run each one from the cmd line I can compare the times for the databases. The results from running manually are below:

The results here are interesting: clearly we can infer that the data warehouse would be quicker if this server had more memory. The bottleneck here is that we just don't have the amount of memory that sqlpackage wants to use to use place the whole database in memory.

The Oltp databases are twice as quick when using the InMemory option, and use twice the amount of memory than the file option.

Get ready for a major remodel, fellas. We're back in hardware mode

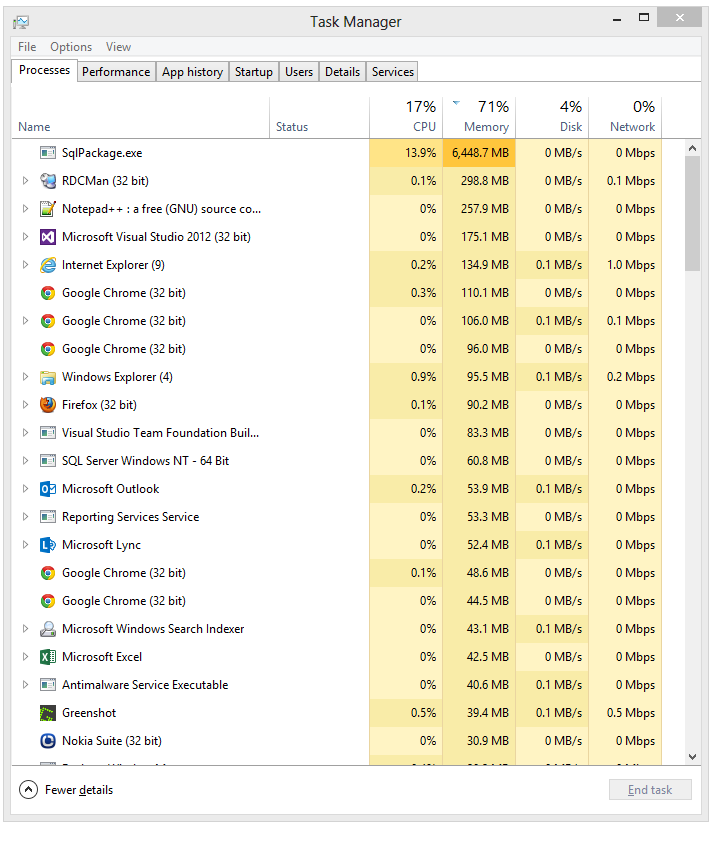

To verify the performance I'm going to run the build locally with the Storage set to File and to Memory to see what the performance difference is. The desktop that I use is a pretty up-to-date hardware wise: i7 processor, 16GB of memory and RAID 0 SSDs:

Which is considerably more powerful than the build server, an Optiplex 960:

By using Geekbench and Crystal Disk Mark tooling I can compare the difference in the performance. Geekbench is a great tool that checks your CPU and memory performance, whilst Crystal Disk Mark is a disk performance benchmarker.

This is my desktop:

and this is the build server:

You can see the full details of the run on the Geekbench website. This is the link for the Optiplex 960 and this is for the desktop.

The difference in having RAID 0 SSD over a single HDD is incredible! Even if I didn't have 16GB of DDR3 RAM then the SSD would make a major difference.

Let's look at the results:

Big difference in the speeds for the data warehouse. Even when using the file option, the faster machine outpaces the build server in memory. And when in memory, the Memory usage peaks at 6.4GB.

Unsurprisingly, throwing money at the problem and upgrading the build server hardware has improved performance greatly.

Summary

Sqlpackage is a highly configurable command line utility which we can leverage in builds to automate database scripting and deployment. It can however use considerable resources when building the database model for very large databases. What constitutes a very large database depends on the meta data within the dacpac and the structure of the model within the solution as opposed to the size of the data contained within. Clearly it's important to manage the structure; one way in which to aid this is to use composite database solutions. This is especially useful when managing databases with partitioned tables.

The build servers I have at my disposal are not great, and if I had the opportunity I'd have a ratio of RAM to Cores as 8:1, rather than the 2:1 ratio that I currently have. On the 4 core machine I have now that would mean 32GB, which is hardly expensive, and this would allow me to run the SqlPackage command InMemory as opposed to writing to file. Or failing that using SSDs. The run on my desktop showed that upgrading the hardware would make considerable difference. Whinging aside, the File option has proven to be a suitable alternative when upgrading the memory in the server is just not an option.

MSDN Walkthrough: Partition a Database Project by Using Composite Projects